
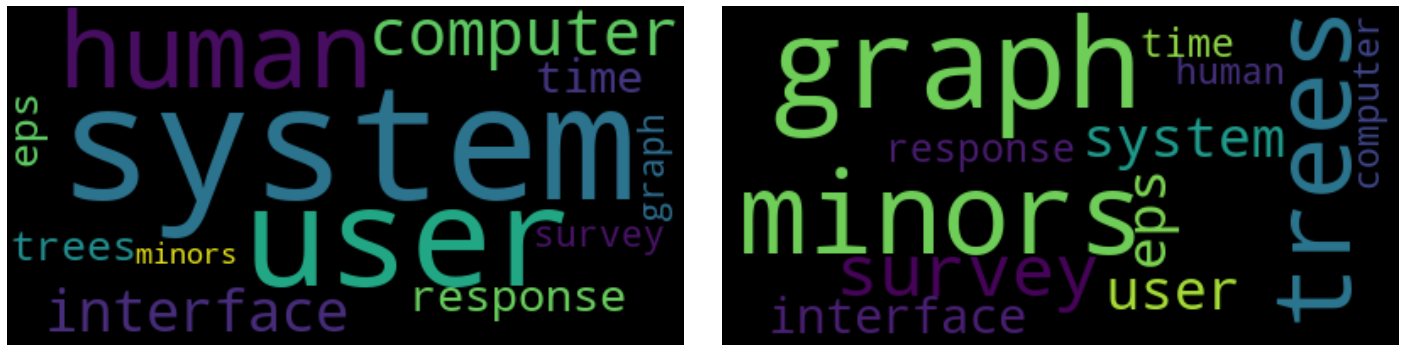

Get plain text topics from gensim lda

12/24/2023

Latent Dirichlet Allocation (LDA) is a common topic modeling algorithm with a solid implementation in Python's Gensim package. Topic identification is a method for finding hidden subjects in large amounts of text. This article was published as a part of the Data Science Blogathon.

Hi guys! First of all, thanks for the great library! I have also run into the issue that subsequent inferences on the same document are not deterministic and yield slightly different results every time.

As pointed out, that's due to the variational Bayesian inference done in LdaModel.inference: the document topic assignments gamma are randomly initialized from self.random_state, and inference runs until the mean change of the gammas drops below self.gamma_threshold (which can be set when instantiating the LDA model).

I am using a twofold fix for now to get reproducible results.

First, significantly lower self.gamma_threshold to something reasonable, like 1e-10 or so (rather than the default 0.001). Variations between subsequent inferences should then be of that order of magnitude.

Second, despite a lower threshold, inference sometimes ends up in different local minima, depending on the random initial values of gamma. For that reason I reset the rng in self.random_state to a new one with the same seed every time I call get_document_topics (I know this is not optimal, but for now it is good enough in my application). It was also suggested to cache the assignments of already processed documents in an index.

I was actually wondering if this could be added as a feature to the LdaModel? After all, the topic word probabilities are stored in self.
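The reseed-and-cache idea described above can be sketched in plain Python. This is only an illustration under stated assumptions: `ReproducibleTopics`, `ToyModel` and `make_rng` are hypothetical names, and `ToyModel` is a stand-in that merely imitates a model whose inference starts from a random draw. It is not gensim's LdaModel, and it does not model the gamma_threshold part of the fix.

```python
import random
from typing import Callable, Dict, List, Sequence, Tuple

BagOfWords = Sequence[Tuple[int, int]]   # (token_id, count) pairs
TopicDist = List[Tuple[int, float]]      # (topic_id, probability) pairs


class ToyModel:
    """Hypothetical stand-in for a model whose inference is randomly initialised."""

    def __init__(self, num_topics: int, seed: int) -> None:
        self.num_topics = num_topics
        self.random_state = random.Random(seed)

    def get_document_topics(self, bow: BagOfWords) -> TopicDist:
        total = sum(count for _, count in bow)
        # The random draw makes repeated calls differ unless the RNG is reset.
        weights = [
            self.random_state.gammavariate(100.0, 0.01) + total / self.num_topics
            for _ in range(self.num_topics)
        ]
        norm = sum(weights)
        return [(k, w / norm) for k, w in enumerate(weights)]


class ReproducibleTopics:
    """Reseed the model's RNG before each inference and cache results per document."""

    def __init__(self, model, make_rng: Callable[[], object]) -> None:
        self.model = model
        self.make_rng = make_rng          # returns a fresh RNG with a fixed seed
        self._cache: Dict[tuple, TopicDist] = {}

    def topics(self, bow: BagOfWords) -> TopicDist:
        key = tuple(sorted(bow))          # hashable index key for the document
        if key not in self._cache:
            self.model.random_state = self.make_rng()
            self._cache[key] = self.model.get_document_topics(bow)
        return self._cache[key]


model = ToyModel(num_topics=2, seed=0)
wrapper = ReproducibleTopics(model, make_rng=lambda: random.Random(1))
doc = [(0, 1), (3, 2)]
first = wrapper.topics(doc)
wrapper._cache.clear()                    # force a fresh inference
second = wrapper.topics(doc)
print(first == second)
```

With a real gensim model, the same wrapper would presumably be given a factory that returns a NumPy random state with a fixed seed, since that is the kind of object the text says is stored in self.random_state. That pairing is an assumption and is not tested here.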

    from nltk.tokenize import RegexpTokenizer
    from stop_words import get_stop_words
    from nltk.stem.porter import PorterStemmer
    from gensim import corpora, models
    import gensim

    tokenizer = RegexpTokenizer(r'\w+')

    # create English stop words list
    en_stop = get_stop_words('en')

    # Create p_stemmer of class PorterStemmer
    p_stemmer = PorterStemmer()

    # create sample documents
    doc_a = "Brocolli is good to eat. My brother likes to eat good brocolli, but not my mother."
    doc_b = "My mother spends a lot of time driving my brother around to baseball practice."
    doc_c = "Some health experts suggest that driving may cause increased tension and blood pressure."
    doc_d = "I often feel pressure to perform well at school, but my mother never seems to drive my brother to do better."
    doc_e = "Health professionals say that brocolli is good for your health."

    # compile sample documents into a list
    doc_set =

    # list for tokenized documents in loop
    texts =

    # loop through document list
    for i in doc_set:

        # clean and tokenize document string
        raw = i.

        # remove stop words from tokens
        stopped_tokens =

    # turn our tokenized documents into a id term dictionary
    dictionary = corpora.

    # convert tokenized documents into a document-term matrix
    corpus =

    LdaModel(corpus, num_topics=2, id2word=dictionary, passes=20)

    print 'The following two must have the same value (but they do not)'
    print ldamodel.

Please see my code below as well as some bits of info about my Python, numpy, scipy, and gensim.

    from gensim.sklearn_integration import SklLdaModel

    model = SklLdaModel(num_topics=2, id2word=dictionary, iterations=20, random_state=1)
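The preprocessing steps named in the listing's comments (tokenize, remove stop words, build an id-to-term dictionary, convert to a document-term matrix) can be sketched with the standard library alone. This is a simplified stand-in, not the original code: `STOP_WORDS` is a deliberately tiny hypothetical list, `tokenize`, `build_dictionary` and `doc2bow` are illustrative helpers rather than the nltk or gensim functions, stemming is left out, and the first sample document is shortened.

```python
import re
from collections import Counter

# Hypothetical, very small stop-word list used only for this illustration.
STOP_WORDS = {"is", "to", "my", "but", "not", "that", "for", "your"}


def tokenize(text):
    """Lowercase the text, split it into word tokens, and drop stop words."""
    return [t for t in re.findall(r"\w+", text.lower()) if t not in STOP_WORDS]


def build_dictionary(texts):
    """Assign each distinct token an integer id in order of first appearance."""
    token2id = {}
    for tokens in texts:
        for tok in tokens:
            token2id.setdefault(tok, len(token2id))
    return token2id


def doc2bow(tokens, token2id):
    """Return sorted (token_id, count) pairs for one tokenized document."""
    counts = Counter(token2id[t] for t in tokens if t in token2id)
    return sorted(counts.items())


docs = [
    "Brocolli is good to eat.",
    "Health professionals say that brocolli is good for your health.",
]
texts = [tokenize(d) for d in docs]
token2id = build_dictionary(texts)
corpus = [doc2bow(tokens, token2id) for tokens in texts]

for bow in corpus:
    print(bow)
```

Each document ends up as a list of (token id, count) pairs, which is the bag-of-words shape that a topic model consumes as its corpus.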
